Key findings at a glance:

- A single Character.AI message uses an estimated 0.24–0.30 watt-hours of electricity on 2026 hardware — down from 5–10 Wh in 2023

- A full 30-minute session uses roughly 25 watt-hours — comparable to charging a laptop once

- Water consumption per message is under 1 millilitre on modern liquid-cooled infrastructure

- The same session generates 170× more carbon in South Carolina than in Stockholm, depending on which data center handles your traffic

- Running a 7-billion-parameter model locally on your device cuts carbon by 93–96% compared to cloud AI

- Character.AI has published zero sustainability disclosures as of March 2026

Behind every AI conversation is physical hardware that consumes real electricity and, often, real water. Every exchange routes through racks of high-performance GPUs in data centers that draw continuous power, generate enormous heat, and rely on cooling systems that frequently consume freshwater.

As Character.AI has grown to over 100 million monthly users — many spending 25 to 45 minutes per session — questions about its environmental footprint have shifted from niche concern to mainstream search query. This guide provides the most complete, evidence-based answer currently available.

What “Character AI Environmental Impact” Actually Means

The phrase covers three distinct resource streams:

Electricity consumption — drawn by GPUs performing model inference (generating each response), plus overhead for networking, storage, and facility systems.

Water usage — consumed by cooling systems that prevent hardware from overheating. Many data centers use evaporative cooling, which consumes water through evaporation rather than simply recirculating it.

Carbon emissions — indirect emissions resulting from electricity generation, the carbon intensity of which varies widely by grid and by hour of day.

Character.AI is designed differently from most AI tools. Where you might ask ChatGPT a one-off question and close the tab, Character.AI is built for extended, relationship-style interaction, and average sessions run 5–9× longer than on comparable platforms. That engagement model is central to understanding why its per-user footprint warrants scrutiny, even when per-message costs are similar to competitors’.

Infrastructure note: Following Google’s licensing of Character.AI technology and the departure of key founding engineers to Google DeepMind in mid-2024, the platform now operates primarily on Google Cloud infrastructure. This matters for environmental calculations because Google Cloud maintains a published power usage effectiveness (PUE) ratio of 1.09 — versus the industry average of 1.56 — and holds a stated goal of 24/7 carbon-free energy matching by 2030.
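PUE is the ratio of total facility energy to the energy consumed by IT equipment, so the gap between 1.09 and 1.56 translates directly into overhead for cooling, power conversion and lighting. The sketch below applies both ratios to a hypothetical 100 Wh of server load; the load figure is illustrative only.

```python
# PUE = total facility energy / IT equipment energy,
# so total facility energy = IT energy * PUE.

def facility_energy_wh(it_energy_wh: float, pue: float) -> float:
    """Total facility energy for a given IT (server) load and PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_energy_wh * pue


def overhead_wh(it_energy_wh: float, pue: float) -> float:
    """Energy spent on everything other than the IT equipment itself."""
    return facility_energy_wh(it_energy_wh, pue) - it_energy_wh


if __name__ == "__main__":
    it_load = 100.0  # hypothetical: 100 Wh of GPU/server energy
    for label, pue in [("Google Cloud (published)", 1.09), ("Industry average", 1.56)]:
        total = facility_energy_wh(it_load, pue)
        extra = overhead_wh(it_load, pue)
        print(f"{label}: total {total:.0f} Wh, overhead {extra:.0f} Wh")
```

For the same IT load, the industry-average facility spends roughly six times as much energy on overhead (56 Wh versus 9 Wh).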

Energy: How Much Electricity Does One Conversation Use?

The most widely cited 2023–2024 figure for AI energy consumption — 10 watt-hours per query — was based on early GPT-4 class models running on NVIDIA A100 hardware. That figure has fallen substantially since.

Two developments drove the change. First, NVIDIA’s Blackwell generation: for its rack-scale GB200 NVL72 systems, built on Blackwell GPUs, NVIDIA claims up to 25× lower energy consumption and up to 30× faster inference for large language models, plus up to 4× faster training, compared with the same number of H100 GPUs. Those gains come largely from fifth-generation Tensor Cores with FP4 support and much higher memory bandwidth. These chips began widespread cloud deployment in late 2025. Second, model quantisation (compressing model weights to 4-bit or 8-bit formats with minimal quality loss) has become standard practice, further reducing per-token compute requirements.
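One reason quantisation matters, especially for running models on ordinary hardware, is memory. The sketch below estimates how much memory a model's weights alone occupy at different precisions. It is a back-of-envelope calculation that ignores the KV cache, activations and runtime overhead, so real memory use is somewhat higher.

```python
# Back-of-envelope estimate of the memory needed to hold model weights.
# Counts weights only: KV cache, activations and runtime overhead are ignored.

def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    bytes_per_weight = bits_per_weight / 8
    return params_billion * 1e9 * bytes_per_weight / 1e9


if __name__ == "__main__":
    for bits in (16, 8, 4):
        print(f"7B model at {bits}-bit: ~{weight_memory_gb(7, bits):.1f} GB of weights")
```

At 4-bit, a 7B model's weights take about 3.5 GB and fit comfortably in 8 GB of RAM; at 16-bit they need about 14 GB and do not.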

Energy per AI text message — hardware generation comparison

| Hardware era | Chip | Estimated Wh per message | Deployment status (2026) |
|---|---|---|---|
| 2021–2023 | NVIDIA A100 | 5–10 Wh | Legacy / phasing out |
| 2023–2024 | NVIDIA H100 | 1.5–3 Wh | Transitioning |
| 2025–2026 | NVIDIA Blackwell B200 | 0.24–0.30 Wh | Current standard |

One Character.AI message at 0.24–0.30 Wh is roughly equivalent to loading three standard webpages. A 100-message session therefore comes to roughly 24–30 Wh, about the same as two full smartphone charges or one small laptop charge.
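The per-message figure scales linearly into a session estimate. The sketch below assumes every message costs the same (longer conversations may in practice cost somewhat more per message) and uses a hypothetical 15 Wh smartphone battery as the comparison point, ignoring charger losses.

```python
# Scale the per-message estimate (0.24-0.30 Wh) up to whole sessions.
WH_PER_MESSAGE_LOW, WH_PER_MESSAGE_HIGH = 0.24, 0.30
PHONE_BATTERY_WH = 15.0  # assumption: a typical smartphone battery capacity


def session_energy_wh(messages: int, wh_per_message: float) -> float:
    """Energy for a session, assuming a constant cost per message."""
    return messages * wh_per_message


def phone_charge_equivalents(energy_wh: float, battery_wh: float = PHONE_BATTERY_WH) -> float:
    """How many full phone charges the same energy would provide (no charger losses)."""
    return energy_wh / battery_wh


if __name__ == "__main__":
    for messages in (80, 100, 150):
        low = session_energy_wh(messages, WH_PER_MESSAGE_LOW)
        high = session_energy_wh(messages, WH_PER_MESSAGE_HIGH)
        print(f"{messages} messages: {low:.1f}-{high:.1f} Wh "
              f"(~{phone_charge_equivalents(low):.1f}-{phone_charge_equivalents(high):.1f} phone charges)")
```

At 100 messages this reproduces the 24–30 Wh session figure used throughout this guide.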

Why Session Length Changes the Calculation

Per-message efficiency is only part of the picture. Character.AI’s average session involves 80–150 messages over 25–45 minutes, according to user behaviour data cited in the company’s investor materials. Aggregated across a full session:

Estimated energy per session — Character.AI versus comparable activities

| Activity | Duration | Estimated energy (Wh) |
|---|---|---|
| Character.AI session | 30 min, ~100 messages | 24–30 Wh |
| ChatGPT session | 8 min, ~8 messages | 2–4 Wh |
| Laptop charge cycle | — | 30–60 Wh |
| HD video streaming | 30 min | ~150 Wh |
| Gaming PC | 30 min | 250–500 Wh |

“A 30-minute Character.AI session uses less energy than a laptop charge cycle — but far more than a Google search. The engagement model, not the underlying technology, is the variable that matters.”

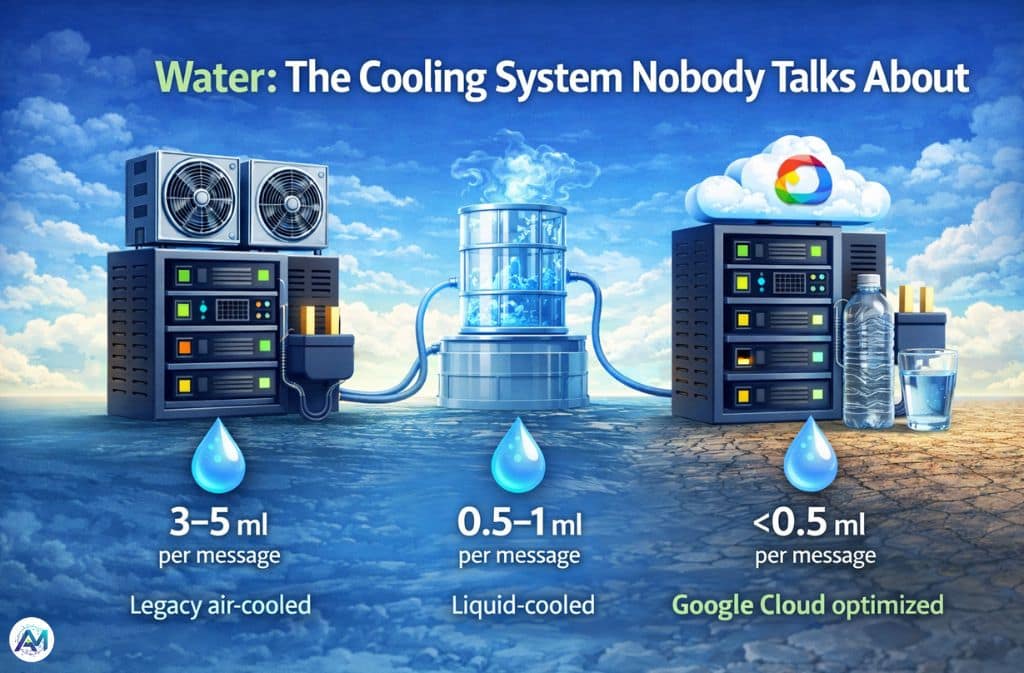

Water: The Cooling System Nobody Talks About

Energy gets most of the attention in AI sustainability coverage. Water deserves more of it.

Modern GPUs produce enormous heat. Removing that heat efficiently requires cooling systems, and the most energy-efficient method — evaporative cooling — consumes water through vapour loss rather than simply circulating it back through the system.

According to the IEA’s Energy and AI report, global data center electricity consumption is set to more than double to around 945 TWh by 2030, slightly more than Japan’s entire current electricity consumption. The water infrastructure required to cool that expanded capacity at today’s water-per-kWh ratios represents one of the most underreported resource challenges in the AI buildout.

A study by Li et al. (2023) at the University of California, Riverside was among the first to quantify AI-specific water consumption, estimating that a conversation of 20–50 questions with an early GPT-3 model consumed roughly 500 ml of water. More efficient 2026 infrastructure has likely reduced this substantially.

Estimated water use per AI interaction (2026)

| Cooling system type | Water per message | Per 30-min session (100–150 messages) |
|---|---|---|
| Legacy air-cooled facility | 3–5 ml | 300–750 ml |
| Modern liquid-cooled (2024) | 0.5–1 ml | 50–150 ml |
| Google Cloud optimised (2026 est.) | <0.5 ml | <75 ml |
| Local on-device (Edge AI) | 0 ml | 0 ml |
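The per-session column multiplies each per-message range by 100 messages at the low end and 150 at the high end. The sketch below reproduces that and, for the modern row only, back-calculates the Water Usage Effectiveness (litres per kWh) the estimates imply. It assumes the 0.27 Wh midpoint of the per-message energy estimate; formally WUE is measured against IT equipment energy, so the implied figure is indicative only.

```python
# Per-session water from per-message estimates (low x 100 messages, high x 150).
WATER_ML_PER_MESSAGE = {
    "Legacy air-cooled facility": (3.0, 5.0),
    "Modern liquid-cooled (2024)": (0.5, 1.0),
}
MESSAGES_PER_SESSION = (100, 150)
WH_PER_MESSAGE = 0.27  # assumption: midpoint of the 0.24-0.30 Wh estimate


def session_water_ml(ml_per_message: tuple[float, float],
                     messages: tuple[int, int] = MESSAGES_PER_SESSION) -> tuple[float, float]:
    """Low and high water use for one session, in millilitres."""
    return ml_per_message[0] * messages[0], ml_per_message[1] * messages[1]


def implied_wue(ml_per_message: float, wh_per_message: float = WH_PER_MESSAGE) -> float:
    """Litres of water per kWh implied by per-message estimates.

    ml per Wh is numerically equal to litres per kWh (both scale by 1000).
    """
    return ml_per_message / wh_per_message


if __name__ == "__main__":
    for system, per_message in WATER_ML_PER_MESSAGE.items():
        low, high = session_water_ml(per_message)
        print(f"{system}: {low:.0f}-{high:.0f} ml per session")
    # Only the modern row is paired with the 2026 per-message energy estimate.
    low_ml, high_ml = WATER_ML_PER_MESSAGE["Modern liquid-cooled (2024)"]
    print(f"Implied WUE (modern): {implied_wue(low_ml):.1f}-{implied_wue(high_ml):.1f} L/kWh")
```

The modern row works out to roughly 1.9–3.7 L/kWh, which is the kind of figure a published WUE disclosure would let readers check directly.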

The Water–Energy Trade-Off

There is a genuine engineering tension here. Evaporative cooling uses more water but less electricity, while dry air cooling with mechanical chillers uses little or no on-site water but requires more power for fans and chillers. Google’s own 2024 Environmental Report acknowledges this trade-off and describes efforts to use recycled water and non-potable sources at new facilities, noting that 86% of freshwater withdrawals came from sources at low or medium risk of water depletion or scarcity.

The geographic water crisis: Many AI data centers are built in arid regions where land is cheap and solar power is abundant — Texas, Arizona, Nevada. The Texas Water Development Board projects that data centers could account for 2.7% of the state’s total water use by 2030, creating direct competition with agriculture and residential users in drought-stressed regions.

Carbon Emissions and the “Carbon-Neutral Cloud” Caveat

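Character.AI runs on Google Cloud. Google described its operations as carbon neutral from 2007 onward, largely through carbon offset credits, and has matched 100% of its annual electricity use with renewable energy purchases since 2017. In 2023 it stopped maintaining operational carbon neutrality through offsets and shifted its focus to net-zero emissions by 2030. On the surface, that record suggests a low-carbon platform. The reality is more layered.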

First, “carbon neutral” relied on offsets: payments that fund emissions reductions elsewhere, not physical elimination at source. Google’s 24/7 carbon-free energy goal, matching consumption hour by hour with carbon-free generation, remains a 2030 target, and the company’s own region-by-region data shows that hourly carbon-free matching varies dramatically by location, with some US facilities still drawing substantially on fossil-fuelled generation during evening peak demand hours.

Second, manufacturing emissions are not included in operational carbon accounting. Producing the specialised AI accelerators powering Character.AI involves mining rare earth materials and fabrication processes with significant embedded carbon — costs that are rarely attributed to per-conversation footprint estimates.
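The difference between annual renewable matching and the hour-by-hour matching behind Google’s 24/7 goal is easy to see with toy numbers. The sketch below uses an invented four-slot profile (each slot standing in for an hour) in which carbon-free supply equals total consumption over the period but is concentrated at midday. The hourly score counts only carbon-free energy available in the same slot as the demand; all figures are hypothetical.

```python
# Annual (volumetric) matching vs hour-by-hour carbon-free matching.
# All numbers are hypothetical and chosen only to illustrate the difference.

def annual_match(consumption: list[float], cfe_supply: list[float]) -> float:
    """Share of total consumption covered by total carbon-free supply (capped at 100%)."""
    return min(1.0, sum(cfe_supply) / sum(consumption))


def hourly_cfe_score(consumption: list[float], cfe_supply: list[float]) -> float:
    """Share of consumption matched by carbon-free supply in the same time slot."""
    matched = sum(min(c, s) for c, s in zip(consumption, cfe_supply))
    return matched / sum(consumption)


# Hypothetical profile (MWh per slot): flat demand, solar-heavy supply.
CONSUMPTION = [10.0, 10.0, 10.0, 10.0]
CFE_SUPPLY = [5.0, 20.0, 12.0, 3.0]  # night, midday, afternoon, evening peak

if __name__ == "__main__":
    print(f"Annual matching: {annual_match(CONSUMPTION, CFE_SUPPLY):.0%}")
    print(f"Hourly CFE score: {hourly_cfe_score(CONSUMPTION, CFE_SUPPLY):.0%}")
```

Both calculations see the same total supply, yet annual matching reports 100% while the hourly score is 70%, because the midday surplus cannot cover the night and evening-peak shortfall.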

Estimated CO₂ per Character.AI Session by Google Cloud Region

| Region | Location | Grid carbon intensity (gCO₂/kWh) | Carbon-free energy % | CO₂ per 30-min session (~25 Wh) |
|---|---|---|---|---|
| europe-north2 | Stockholm | 3 | 100% | ~0.1 g |
| northamerica-northeast1 | Montréal | 5 | 99% | ~0.2 g |
| us-west1 | Oregon | 79 | 87% | ~2 g |
| us-east4 | N. Virginia | 323 | 62% | ~8 g |
| us-east1 | S. Carolina | 576 | 31% | ~14 g |
| asia-south1 | Mumbai | 679 | 9% | ~17 g |
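The carbon column follows from a single multiplication: session energy in kWh times the grid's carbon intensity. The sketch below assumes a 25 Wh session and applies each region's intensity from the table; it counts operational electricity only, not manufacturing emissions or offsets.

```python
# CO2 per session = session energy (kWh) x grid carbon intensity (gCO2/kWh).
SESSION_WH = 25.0  # assumption: ~25 Wh for a 30-minute session

GRID_INTENSITY_G_PER_KWH = {
    "europe-north2 (Stockholm)": 3,
    "northamerica-northeast1 (Montréal)": 5,
    "us-west1 (Oregon)": 79,
    "us-east4 (N. Virginia)": 323,
    "us-east1 (S. Carolina)": 576,
    "asia-south1 (Mumbai)": 679,
}


def session_co2_g(energy_wh: float, intensity_g_per_kwh: float) -> float:
    """Grams of CO2 for a session at a given grid carbon intensity."""
    return energy_wh / 1000 * intensity_g_per_kwh


if __name__ == "__main__":
    for region, intensity in GRID_INTENSITY_G_PER_KWH.items():
        print(f"{region}: {session_co2_g(SESSION_WH, intensity):.2f} g CO2")
    ratio = (GRID_INTENSITY_G_PER_KWH["us-east1 (S. Carolina)"]
             / GRID_INTENSITY_G_PER_KWH["europe-north2 (Stockholm)"])
    print(f"S. Carolina vs Stockholm: ~{ratio:.0f}x")
```

Dividing the two rounded intensities directly gives 192×, so the exact multiple depends on how Stockholm's very small figure is rounded; either way, the gap is more than a hundredfold.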

Geography: Not All Data Centers Are Equal

The table above makes a stark point: the same conversation generates roughly 170× more carbon if routed through South Carolina versus Stockholm. Your digital footprint is partly determined by a routing decision made by infrastructure you cannot observe or control.

Character.AI does not offer users a region selection option. Traffic is routed to the fastest or least-congested data center, not the cleanest. For a US-based user, that most commonly means Northern Virginia’s “Data Center Alley”, home to over 665 facilities consuming approximately 24 TWh annually, where Google’s us-east4 region runs at 62% carbon-free energy, well below Oregon (87%) or Montréal (99%).

Character AI vs. ChatGPT vs. Streaming Video

For individual users, Character.AI’s footprint is modest compared to video streaming or gaming. The per-session energy of roughly 25 Wh sits far below the ~150 Wh required for 30 minutes of HD streaming, and a fraction of the 250–500 Wh drawn by a gaming PC over the same period.

The problem is scale and the engagement model. ChatGPT has roughly 2.5× as many monthly users, yet its 5–8 minute sessions likely add up to lower aggregate energy demand than Character.AI’s 25–45 minute ones. Optimised for sustained companionship rather than transactional queries, Character.AI as it exists now is a fundamentally different infrastructure challenge from the one it posed at launch, and that challenge has grown rapidly.

Platform-Level Environmental Comparison (2026 Estimates)

| Metric | Character.AI | ChatGPT |
|---|---|---|
| Monthly active users | ~100 million | ~246 million |
| Avg. session length | 25–45 min | 5–8 min |
| Messages per session | 80–150 | 4–12 |
| Energy per session | ~25 Wh | ~3 Wh |
| Sustainability disclosure | None published | Partial (via Microsoft) |
| Cloud provider | Google Cloud | Microsoft Azure |
| Public carbon targets | None published | Carbon-negative by 2030 (Microsoft) |
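The claim that ChatGPT's larger user base still adds up to less energy can be checked roughly with the table's own figures. The sketch below assumes, hypothetically, that every user on both platforms runs one session, which isolates the effect of session length; real visit frequencies are not published and would change the result.

```python
# Aggregate energy for one "round" of sessions across a platform's user base.

def platform_energy_mwh(users: float, wh_per_session: float,
                        sessions_per_user: float = 1.0) -> float:
    """Total energy in MWh if each user runs `sessions_per_user` sessions."""
    return users * wh_per_session * sessions_per_user / 1e6


if __name__ == "__main__":
    # Table figures; one session per user is a hypothetical simplification.
    character_ai = platform_energy_mwh(100e6, 25)
    chatgpt = platform_energy_mwh(246e6, 3)
    print(f"Character.AI: {character_ai:,.0f} MWh")
    print(f"ChatGPT: {chatgpt:,.0f} MWh")
    print(f"Ratio: {character_ai / chatgpt:.1f}x")
```

Under that assumption Character.AI's round of sessions uses about 2,500 MWh against roughly 738 MWh for ChatGPT, despite having fewer than half as many users.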

The Edge AI Alternative: 90%+ Lower Footprint

The most significant development in AI sustainability in 2026 is not corporate pledges or carbon offsets. It is the maturation of on-device AI — running language models locally on the Neural Processing Units now standard in flagship laptops and smartphones.

Devices with Intel Core Ultra 200V chips (48 TOPS), AMD Ryzen AI 300 series (50 TOPS), and recent Apple Silicon (35–38 TOPS) can run quantised 7-billion-parameter models at practical conversational speeds. Free tools such as Ollama (open source) and LM Studio make this accessible without technical expertise, although they mostly run models on the CPU and GPU rather than the NPU. Ollama runs smaller models such as Llama 3.2 3B or Gemma 3 4B with a single command on most laptops from 2022 onward with 8GB RAM.

Cloud AI vs. Local Edge AI — Environmental Comparison for a 30-Minute Session

| Metric | Character.AI (cloud) | Local 7B model (Edge AI) | Reduction |
|---|---|---|---|
| Energy | ~25 Wh | 1.5–3 Wh | ~88–94% |
| Water | 50–150 ml | 0 ml | 100% |
| Carbon (avg. US grid) | 5–14 g CO₂ | 0.3–0.9 g CO₂ | ~93–96% |
| Data privacy | Data leaves device | Fully local | — |
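The reduction column is a simple ratio. The sketch below computes the energy saving from the table's figures and shows why, on a single grid, the carbon saving must equal the energy saving: CO₂ is energy multiplied by grid intensity, so the intensity cancels. The 390 gCO₂/kWh grid value is a hypothetical placeholder.

```python
# Percentage reduction when moving a session from the cloud to a local model.

def reduction_pct(cloud: float, local: float) -> float:
    """Percentage saved by the local option relative to the cloud option."""
    return (1 - local / cloud) * 100


CLOUD_WH = 25.0        # cloud session estimate from the table
LOCAL_WH = (1.5, 3.0)  # local 7B session range from the table

if __name__ == "__main__":
    best = reduction_pct(CLOUD_WH, LOCAL_WH[0])
    worst = reduction_pct(CLOUD_WH, LOCAL_WH[1])
    print(f"Energy reduction: {worst:.0f}-{best:.0f}%")
    # On the same grid, CO2 scales with energy, so the carbon saving is identical.
    grid = 390.0  # hypothetical grid intensity in gCO2/kWh
    carbon = reduction_pct(CLOUD_WH * grid, LOCAL_WH[1] * grid)
    print(f"Carbon reduction on one grid: {carbon:.0f}%")
```

A carbon saving larger than the energy saving therefore only arises when the cloud and local estimates assume different grids.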

The trade-off is real: local models lack cross-session memory by default, hold less world knowledge, and cannot match the personality consistency of a purpose-trained cloud platform. But for users whose primary use case is companionship-style conversation rather than knowledge retrieval, a well-prompted 7B model covers most needs at a fraction of the environmental cost.
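One way to approximate a "well-prompted" local companion with some memory is to keep a persona in a system prompt and store the transcript on disk between sessions. The sketch below assumes Ollama is installed, running on its default port (11434), and has pulled `llama3.2:3b`; it posts to Ollama's `/api/chat` endpoint with streaming disabled. The persona text, the `companion_history.json` file name and the 20-exchange window are hypothetical choices, and replaying recent turns is only a crude stand-in for real long-term memory.

```python
import json
import urllib.request
from pathlib import Path

# Assumptions: Ollama runs locally on its default port and the model has been
# pulled beforehand (e.g. `ollama pull llama3.2:3b`).
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"
MODEL = "llama3.2:3b"
HISTORY_FILE = Path("companion_history.json")  # hypothetical file name

# Hypothetical persona prompt; adjust it to the character you want.
PERSONA = (
    "You are Ada, a warm and curious companion. Stay in character, keep replies "
    "short, and refer back to earlier conversations when it feels natural."
)


def load_history(path: Path) -> list[dict]:
    """Return saved turns, or an empty list on first run."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return []


def save_history(path: Path, history: list[dict]) -> None:
    path.write_text(json.dumps(history, ensure_ascii=False, indent=2), encoding="utf-8")


def build_payload(history: list[dict], user_text: str, max_exchanges: int = 20) -> dict:
    """Persona + the most recent exchanges + the new message, for /api/chat."""
    recent = history[-2 * max_exchanges:] if max_exchanges > 0 else []
    messages = [{"role": "system", "content": PERSONA}, *recent,
                {"role": "user", "content": user_text}]
    return {"model": MODEL, "messages": messages, "stream": False}


def chat(user_text: str, path: Path = HISTORY_FILE) -> str:
    """Send one message to the local model and persist both turns."""
    history = load_history(path)
    payload = build_payload(history, user_text)
    request = urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        reply = json.loads(response.read())["message"]["content"]
    history.append({"role": "user", "content": user_text})
    history.append({"role": "assistant", "content": reply})
    save_history(path, history)
    return reply
```

With Ollama running, calling `chat("Hi again, do you remember what we talked about?")` returns the model's reply and appends both turns to the history file, so the next session starts with recent context. Everything stays on the device.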

Why Character AI’s Silence on Sustainability Matters

As of March 2026, Character.AI has published zero environmental impact reports, no water usage disclosures, no carbon reduction targets, and no Science Based Targets initiative commitments. Every major AI platform of comparable scale publishes at least partial data:

- OpenAI / Microsoft: Microsoft, OpenAI’s long-standing cloud partner, has committed to becoming carbon negative by 2030 and publishes an annual Environmental Sustainability Report.

- Anthropic: Runs primarily on Amazon Web Services and Google Cloud, and is covered by those providers’ published sustainability reporting and carbon-free energy programmes.

- Google DeepMind: Covered under Google’s comprehensive water-positive-by-2030 and 24/7 carbon-free energy commitments.

- Meta: Published an AI Sustainability Roadmap in 2024 with per-model energy benchmarks.

This gap matters beyond optics. The IEA projects that AI will account for 35–50% of all data center power consumption by 2030, up from 5–15% in recent years, and regulatory pressure is mounting to match. The EU AI Act requires providers of general-purpose AI models to document their models’ known or estimated energy consumption. Germany’s Energy Efficiency Act requires data centers that begin operating from July 2026 to reach a PUE of 1.2 or lower. The proposed US Data Center Efficiency Act would require water and energy reporting for large facilities. Character.AI’s current posture of zero disclosure is increasingly difficult to sustain.

The offset dependency: Character.AI implicitly relies on Google Cloud’s climate claims to cover its operational footprint. But Google’s historical “carbon neutral” status was offset-compensated, not physically zero-emission, and Google stopped claiming operational carbon neutrality in 2023. The electricity powering Character.AI’s servers in Northern Virginia still comes from a grid where only about 62% of Google’s consumption is matched hour by hour with carbon-free generation, leaving roughly 38% supplied largely by fossil fuels. Offsets fund projects elsewhere; they do not un-burn the gas.

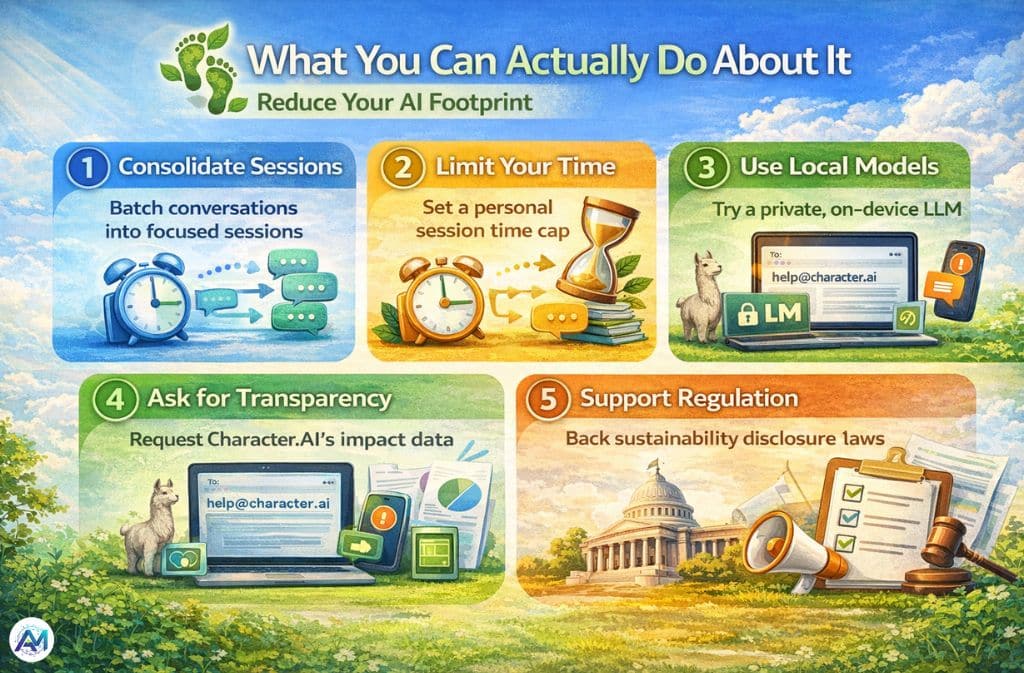

What You Can Actually Do About It

Individual action has real but bounded effect. The structural issue is platform design and mandatory disclosure. With that caveat, five evidence-based steps worth taking:

1. Consolidate your sessions. Starting a new session may carry some overhead, such as re-processing conversation context. Batching conversations into one focused session is likely more efficient than scattered micro-sessions throughout the day.

2. Set a time limit. Character.AI is designed to extend engagement. A 90-minute session uses roughly 3× the resources of a 30-minute one. A personal limit is the simplest lever available.

3. Try a local model for private conversations. Download Ollama (free, open source) and pull Llama 3.2 3B or Gemma 3 4B. Both run on hardware as modest as a 2022 laptop with 8GB RAM. Your data never leaves your device, and energy use drops by 90%+.

4. Ask Character.AI for transparency. Contact help@character.ai requesting publication of annual energy, water, and carbon metrics — specifically Scope 1, 2, and 3 emissions, WUE (Water Usage Effectiveness), and a 2030 reduction target. Aggregated user requests drive corporate disclosure.

5. Support regulatory transparency requirements. The EU AI Act’s implementation guidance and the US Data Center Efficiency Act both have active public consultation phases. Comment on consultations that include environmental reporting requirements for AI platforms.
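The time-limit advice in step 2 rests on simple proportionality. The sketch below assumes a constant pace of about 100 messages per 30 minutes and the 0.27 Wh midpoint of the per-message estimate. Real sessions vary, and longer conversations may cost somewhat more per message as context grows, so treat the output as a rough estimate.

```python
# Session energy as a function of duration, assuming a constant message rate.
MESSAGES_PER_MINUTE = 100 / 30  # assumption: ~100 messages in 30 minutes
WH_PER_MESSAGE = 0.27           # assumption: midpoint of the 0.24-0.30 Wh estimate


def session_energy_for_minutes(minutes: float) -> float:
    """Estimated session energy in Wh for a given session length."""
    return minutes * MESSAGES_PER_MINUTE * WH_PER_MESSAGE


if __name__ == "__main__":
    for minutes in (30, 60, 90):
        print(f"{minutes} min: ~{session_energy_for_minutes(minutes):.0f} Wh")
```

Under these assumptions a 90-minute session comes to about 81 Wh against about 27 Wh for 30 minutes, the 3× ratio cited in step 2.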

FAQs

Q. Is Character AI bad for the environment?

For individual users, the impact of a single session is modest, roughly equivalent to charging a laptop once. The larger concern is platform scale: about 100 million monthly users, many spending 25–45 minutes per session, generate aggregate demand that is significant and growing, and the company has made no public sustainability commitments to address it.

Q. How much energy does Character AI use per message?

Based on 2026 hardware benchmarks (NVIDIA Blackwell B200) and Google Cloud’s published PUE, a single text message is estimated at 0.24–0.30 watt-hours — roughly equivalent to loading three webpages. This is down significantly from 2023 estimates of 5–10 Wh, which were based on older A100 hardware.

Q. How much water does Character AI use per message?

Estimates for modern liquid-cooled data centers suggest under 1 millilitre per message. For a full 30-minute session, total water consumption is likely in the range of 50–150 ml. Precise figures are unavailable because Character.AI does not publish WUE data.

Q. Is Character AI worse for the environment than ChatGPT?

Per message, they are broadly comparable. Per user, Character.AI likely generates higher impact because sessions are 5–9× longer. ChatGPT has more total users but shorter, more transactional interactions. Neither company publishes per-service data sufficient for a definitive comparison.

Q. Does Character AI use renewable energy?

Indirectly. It runs on Google Cloud, which has matched its annual electricity use with renewable energy purchases since 2017 but stopped claiming operational carbon neutrality in 2023. The physical electricity grid powering specific data centers varies by location: Northern Virginia ran at 62% carbon-free energy in 2024. Google’s 24/7 carbon-free target is a 2030 goal.

Q. Can I run a Character AI-style chatbot locally with near-zero carbon?

Yes, with trade-offs. Tools like Ollama can run 3B–7B parameter models on most laptops from 2022 onward with 8GB RAM. Models like Llama 3.2 3B and Gemma 3 4B deliver coherent, in-character dialogue for companionship use cases. Energy use drops by roughly 88–94% vs. cloud AI. Limitations: no cross-session memory by default, smaller knowledge base, less refined character consistency.

Q. Will regulations force Character AI to disclose its environmental impact?

Likely, within the next few years. The EU AI Act requires providers of general-purpose AI models to document their models’ energy consumption. Germany requires data centers that begin operating from July 2026 to reach a PUE of 1.2 or lower. The proposed US Data Center Efficiency Act would mandate water and energy reporting for large facilities.
