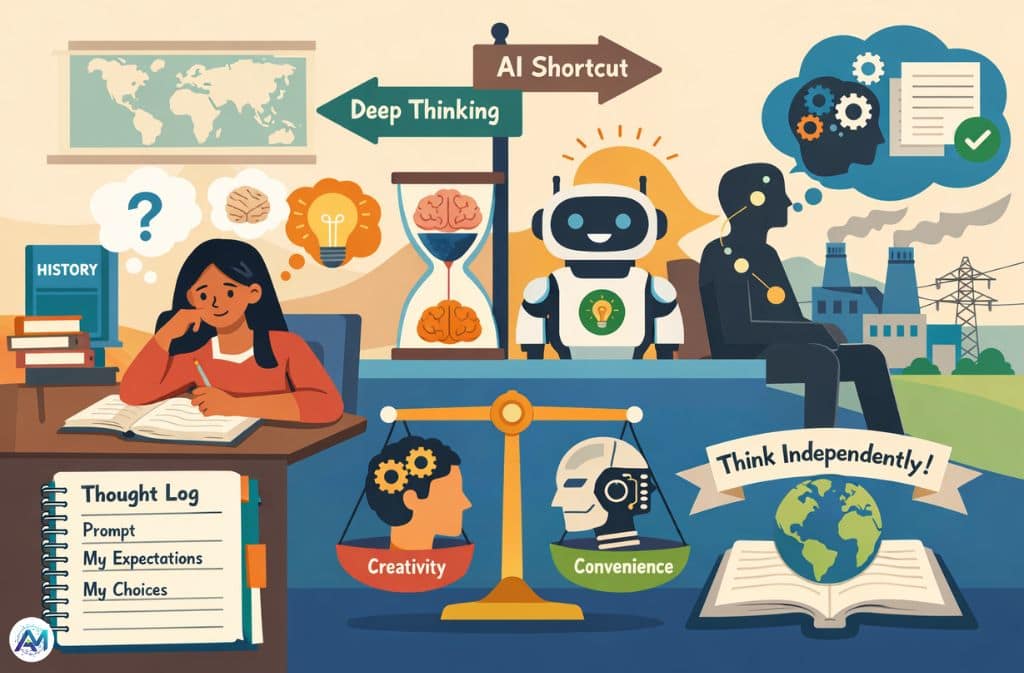

Last month, I watched a student named Mariam attempt to write a simple history paragraph. She typed a sentence, deleted it, tried again, sighed, and finally whispered what so many students say now:

“Why don’t I just let AI do this?”

But she didn’t.

She wrestled through the uncertainty, scratched out an outline, revised it twice, and only then asked an AI tool to check her grammar. The result wasn’t perfect, but it was undeniably hers — messy thinking shaped into meaning.

Moments like these reveal the core tension of 2026: Is artificial intelligence expanding students’ potential — or slowly reducing their ability to think without it?

With AI becoming ubiquitous across schools, workplaces, and creative fields, the answer depends less on the technology and more on the way we use it.

The Skill-Degradation Loop: When Convenience Interrupts Learning

For decades, cognitive scientists documented rising scores on tests of verbal reasoning and logic, a trend known as the Flynn Effect. But recent data suggests a shift. A 2023 study published in the journal Intelligence, which examined nearly 400,000 American adults tested between 2006 and 2018, found declines in verbal reasoning, matrix reasoning, and mathematical ability scores across age groups, education levels, and genders. Researchers describe the pattern as a measurable reversal of the 20th century’s cognitive gains. Because that data predates generative AI, the decline cannot be pinned on chatbots; what it shows is that cognitive gains are not guaranteed.

Researchers from the Stanford Accelerator for Learning’s AI + Education program — which has funded over 30 interdisciplinary studies on AI and learning — identify a consistent concern: students who rely on AI as a first-draft generator show weaker structural writing skills, reduced retention of course content, and difficulty initiating tasks without external prompts.

This doesn’t mean AI is “making students dumber.”

It means skills don’t grow when cognitive friction disappears.

Writing strengthens the brain because it demands active recall, decision-making, and logical sequencing. When an AI system handles those parts, the student practices only editing, not thinking.

The mind grows through effort, not output.

The Cognitive Crutch Effect — and the Counter-Narrative We Forget

Where the Crutch Shows Up

Psychologists describe a modern pattern: students offload thinking onto AI before the thinking even starts.

This leads to:

- Initiation paralysis

- Formulaic reasoning

- Dependence on algorithmic structure

- Fading confidence in self-generated ideas

But here’s the nuance: offloading isn’t always bad.

The 2026 New Literacy Framework argues that cognitive offloading fuels higher-order thinking. We already offload spelling to spellcheck, math to calculators, and navigation to GPS. When used intentionally, AI removes mechanical friction, freeing the brain for interpretation, synthesis, and judgment.

The danger isn’t the tool.

The danger is using the tool before your own mind is engaged.

The Shrinking Creative Possibility Space

AI accelerates productivity but can narrow creativity.

When a model offers the first three ideas, most users choose one of them. This is closely related to what psychologists call anchoring: the earliest options frame everything that follows. Human creativity is nonlinear and chaotic. AI output is convergent and probability-driven.

Over time, this can push students toward safe, average, predictable work.

The risk is not creative collapse.

The risk is creative sameness.

The Hidden Environmental Cost Behind Every Prompt

AI feels weightless, but every generation request pulls from an enormous physical infrastructure.

According to researchers at UC Riverside, generating a 100-word email with GPT-4 uses roughly 519 milliliters of water, approximately one standard bottle, to cool the data center hardware processing the request. That figure may seem small in isolation, but with billions of queries processed daily, it accumulates rapidly. A 2025 Pew Research analysis drawing on International Energy Agency data found that U.S. data centers consumed 183 terawatt-hours of electricity in 2024 alone. That is more than 4% of the country’s total electricity consumption, and roughly equivalent to the annual electricity demand of Pakistan.
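To make the accumulation concrete, here is a back-of-the-envelope sketch in Python. It uses only the 519 ml figure cited above; the daily volume passed in is a hypothetical input chosen for illustration, not a measured value, and treating the per-response figure as constant is a simplifying assumption.

```python
# Cooling water per 100-word AI response, in milliliters (figure cited in the text).
ML_PER_RESPONSE = 519


def daily_water_liters(responses_per_day: int,
                       ml_per_response: float = ML_PER_RESPONSE) -> float:
    """Return total cooling water in liters for a given number of responses.

    Assumes every response costs the same amount of water, which is a
    simplification made for illustration.
    """
    return responses_per_day * ml_per_response / 1000


# Hypothetical volume: one million responses in a day.
print(daily_water_liters(1_000_000))  # 519000.0
```

Under these assumptions, one million short responses would account for about 519,000 liters of water in a single day.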

AI may be digital, but its footprint is undeniably physical.

The 2026 Framework: How to Use AI Without Losing Your Mind (Literally)

Below are the most effective, classroom-ready strategies emerging from educational research and HITL (Human-in-the-Loop) policy reforms.

1. The Trace File Strategy: Your New Thinking Companion

A Trace File is a simple but transformative tool.

Students keep a small “Thought Log” beside every prompt. Inside it, they record:

- What they wanted the AI to do

- What they expected the answer to look like

- What they accepted or rejected from the model

- The reasoning behind their choices

This builds metacognition, Directional Intelligence, information retention, and healthy skepticism. Instead of absorbing AI output, the student actively interrogates it.
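For students who prefer a digital log, the four items above could be captured in a small record like the one below. This is only a sketch: the `TraceEntry` class, its field names, and the `is_complete` check are hypothetical names invented for illustration, not part of any published framework or tool.

```python
from dataclasses import dataclass, field


@dataclass
class TraceEntry:
    """One Thought Log entry kept beside a single AI prompt (hypothetical structure)."""
    goal: str                 # what the student wanted the AI to do
    expectation: str          # what they expected the answer to look like
    accepted: list[str] = field(default_factory=list)  # parts of the output they kept
    rejected: list[str] = field(default_factory=list)  # parts of the output they discarded
    reasoning: str = ""       # why they made those choices

    def is_complete(self) -> bool:
        """True when all four parts of the log have been filled in."""
        return bool(
            self.goal.strip()
            and self.expectation.strip()
            and (self.accepted or self.rejected)
            and self.reasoning.strip()
        )


entry = TraceEntry(
    goal="Check the grammar in my history paragraph",
    expectation="A few comma and tense corrections",
    accepted=["tense fix in sentence 2"],
    rejected=["rewrite of my topic sentence"],
    reasoning="The rewrite changed my argument, so I kept my own wording.",
)
print(entry.is_complete())  # True
```

The completeness check is a design choice of this sketch: an entry with no recorded reasoning is treated as unfinished, since the reasoning is where the reflection happens.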

2. The 80/20 Cognitive Balance Rule

This is becoming a standard guideline in 2026 classrooms.

80% Human → 20% AI

Humans handle: logic, structure, arguments, interpretations, and personal voice.

AI handles: grammar, formatting, rephrasing, and quick explanations.

This rule ensures that AI supports thinking rather than replacing it.
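As a rough illustration, the division of labor above can be written as a simple lookup. The task names come straight from the two lists; the function name `who_handles` and the "undecided" fallback are assumptions added for this sketch.

```python
# Task categories taken from the rule as described in the text.
HUMAN_TASKS = {"logic", "structure", "arguments", "interpretations", "personal voice"}
AI_TASKS = {"grammar", "formatting", "rephrasing", "quick explanations"}


def who_handles(task: str) -> str:
    """Return 'human', 'ai', or 'undecided' for a task name."""
    key = task.strip().lower()
    if key in HUMAN_TASKS:
        return "human"
    if key in AI_TASKS:
        return "ai"
    # The rule as stated does not cover this task, so the student decides.
    return "undecided"


print(who_handles("Grammar"))    # ai
print(who_handles("arguments"))  # human
print(who_handles("research"))   # undecided
```

Anything the lists do not mention falls through to "undecided", which leaves that judgment with the student.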

3. Directional Intelligence: The Skill Every Student Must Master

In the AI era, “prompting” is no longer a technical ability — it’s a thinking skill.

The Stanford AI + Education Summit 2025 highlighted this shift directly: Victor Lee, Stanford’s faculty lead for AI + Education, raised the question of what it means to be genuinely “AI literate” — emphasizing not just using AI tools, but knowing when to use them, when to reject their outputs, and how to maintain intellectual ownership of your own work.

Directional Intelligence is the capacity to:

- Steer an AI toward nuanced ideas

- Reject shallow or generic outputs

- Refine questions based on context

- Maintain authorship and intention

Students with high Directional Intelligence outperform AI-dependent peers across writing, reasoning, and creative tasks. This is the literacy of 2026.

4. Visual Hierarchy: Make Ideas Scannable for Humans and AI

Modern search engines — including platforms run by Google and emerging AI-first engines — prioritize highly structured writing. Using tables, lists, clear headers, framework names, and comparison grids makes your work easier for both human and machine readers to understand.

Deep Work vs. Surface Synthesis

| Feature | Human-Only (Deep Work) | AI-Augmented (Surface Synthesis) |
|---|---|---|
| Logic Construction | Builds neural pathways | Borrows model logic |
| Retention | High (active recall) | Low (passive copying) |
| Creativity | Nonlinear, surprising | Convergent, predictable |
| Speed | Slow but meaningful | Instant but shallow |
| Mental Effort | High | Low |

Are You Becoming AI-Dependent? A Quick Self-Test

If you notice these signs, your cognitive muscles may be weakening:

1. You can’t start a task without prompting a model first.

Initiation ability is the first casualty.

2. You forget information faster when AI summarizes it for you.

Passive intake leaves little for memory to consolidate.

3. Your writing feels polished but no longer feels like you.

This is an early marker of over-offloading.

The Key Takeaway: AI Should Expand You, Not Replace You

The debate over AI isn’t a battle between optimists and skeptics.

It’s a conversation about intentionality.

Use AI to remove friction — not to remove thinking.

Use it to refine your ideas — not to generate them wholesale.

Use it to accelerate your work — not to outsource your mind.

Artificial intelligence won’t be our downfall.

But losing the ability to think independently might be.

The future of learning will not be determined by machines.

It will be determined by the choices humans make while using those machines.
