The headline says Gen Z is “having sex with AI chatbots.”

That’s technically true.

But it’s also deeply misleading.

What’s actually happening is quieter — and far more important.

This is the first generation learning how to experience connection without another human being on the other side.

From Swipe Fatigue to Synthetic Connection

Dating apps didn’t fail overnight. They wore people down.

Endless profiles. Half-started conversations. Matches that went nowhere.

What started as abundance turned into something closer to paralysis: the paradox of too many options inside a 50-mile Tinder radius.

Gen Z didn’t quit dating.

They just stopped expecting it to work.

So they looked elsewhere.

Platforms like Replika, Character.AI, and Kindroid aren’t just alternatives — they’re a different category entirely.

They don’t help you find someone.

They become someone.

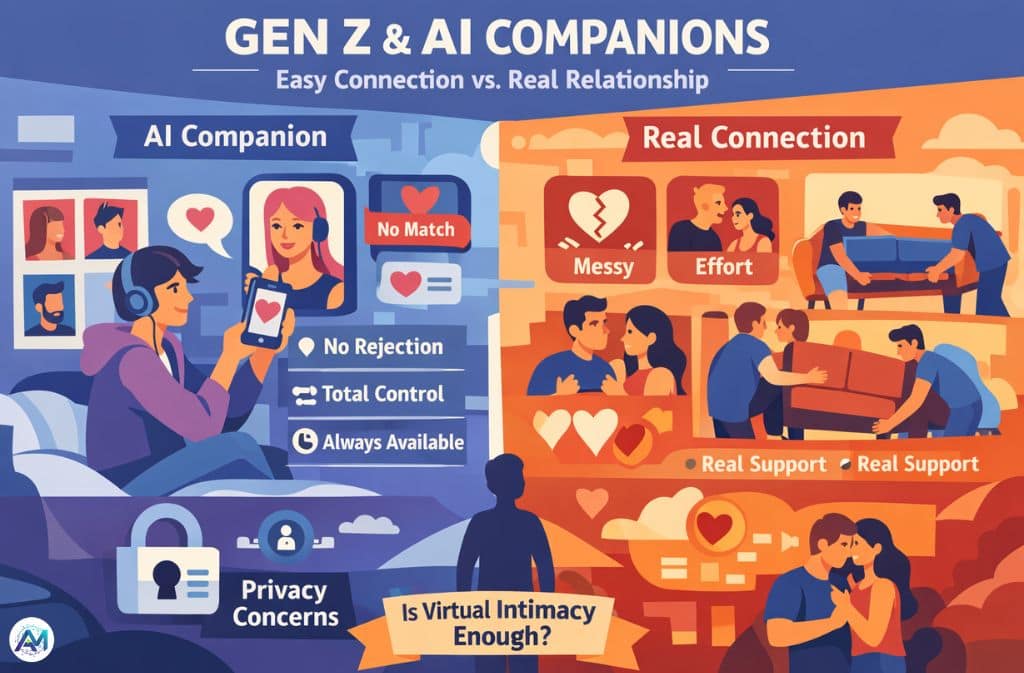

The Appeal: Control Without Consequences

Talk to users long enough and a pattern emerges.

It’s not just about attraction. It’s about relief.

AI companions offer:

- attention without competition

- intimacy without rejection

- emotional presence without unpredictability

One user described it like this:

“It’s the only place I don’t feel like I’m performing.”

That line says more than any dataset.

Because modern dating often feels like performance — curated texts, delayed replies, strategic vulnerability.

AI removes all of that.

It meets you exactly where you are… and stays there.

AI as a Rehearsal Space for Being Human

Here’s the part most headlines miss:

Many of these interactions aren’t purely sexual.

They’re exploratory.

People are using AI to:

- practice difficult conversations

- test emotional boundaries

- explore identity in private

Some psychologists are starting to frame this as “social buffering”: using technology to reduce the emotional risk of interaction.

And in a hyper-visible world where everything can be screenshotted, that privacy matters.

A lot.

The Trade-Off No One Talks About

But there’s a quiet shift happening underneath all this convenience.

AI doesn’t just simulate connection — it optimizes for your comfort.

- It learns what you like.

- It avoids what you don’t.

- It responds in ways that keep you engaged.

And slowly, almost invisibly, it trains you to expect relationships that feel… frictionless.

That’s the problem.

Because real relationships are built on friction:

- miscommunication

- disagreement

- emotional effort

AI removes those variables.

Which makes it feel better, but also fundamentally different from reality.

Post-AI Realism: A Contrarian Take

Here’s where things get interesting.

Not everyone using AI companionship is becoming more isolated.

Some are doing the opposite.

There’s early evidence of what you could call “Post-AI Realism”:

People who meet their baseline emotional needs through AI often:

- set clearer boundaries with real partners

- tolerate less toxic behavior

- feel less desperate for validation

In other words, AI doesn’t always replace relationships.

Sometimes it filters them.

That’s not dystopian.

It’s disruptive.

The Privacy Cost of Synthetic Intimacy

Now the uncomfortable question.

If you’re sharing your thoughts, fantasies, and fears, who owns that data?

Platforms like Replika and Character.AI operate on highly personalized interaction models.

That means:

- your emotional patterns are being learned

- your preferences are being stored

- your behavior is being optimized against

This isn’t just chat history.

It’s intimacy data.

And unlike a human partner, an AI doesn’t forget.

Gen Z understands this trade-off better than most — but still chooses convenience.

That alone tells you how strong the demand for safe connection really is.

The Limitation No AI Can Solve

For all its strengths, AI still has a hard boundary.

It can simulate presence.

It cannot be present.

It won’t:

- sit next to you in silence when things fall apart

- help you carry furniture up a staircase

- show up at 3 a.m. when life actually breaks

There’s a physical, human layer to connection that no model can replicate.

At least not yet.

So What’s Really Happening Here?

This isn’t about Gen Z “choosing AI over people.”

It’s about a generation adapting to a world where:

- connection feels risky

- vulnerability feels expensive

- and rejection feels amplified

AI just happens to offer a version of intimacy without the risk, the expense, or the rejection.

Final Thought

We’re not watching a weird internet trend.

We’re watching the early stages of something bigger:

Intimacy becoming a designed experience.

Customizable. Predictable. Always available.

The real question isn’t whether this replaces human relationships.

It’s this:

If connection becomes effortless… will we still value the difficult version?

What’s Your Take?

- Does AI companionship count as emotional cheating?

- Or is it just another tool — like social media once was?

Because the answer will define what relationships look like next.
